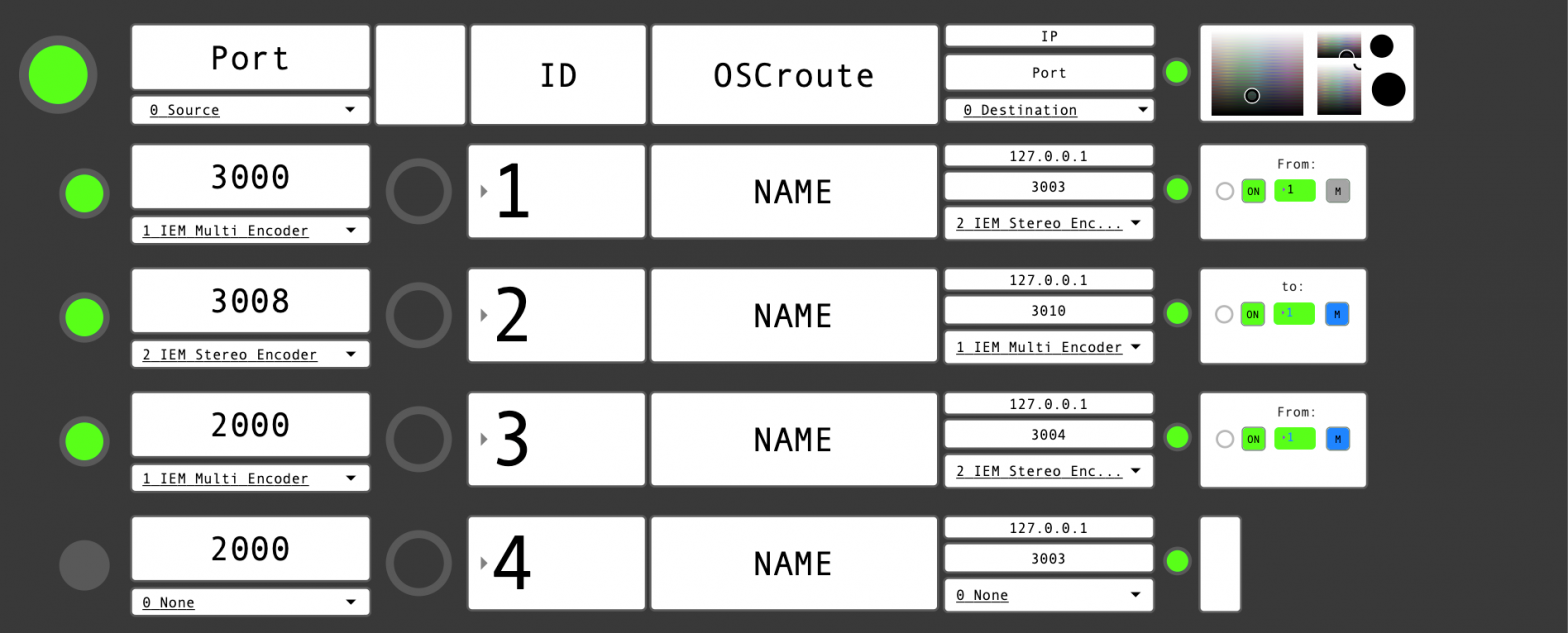

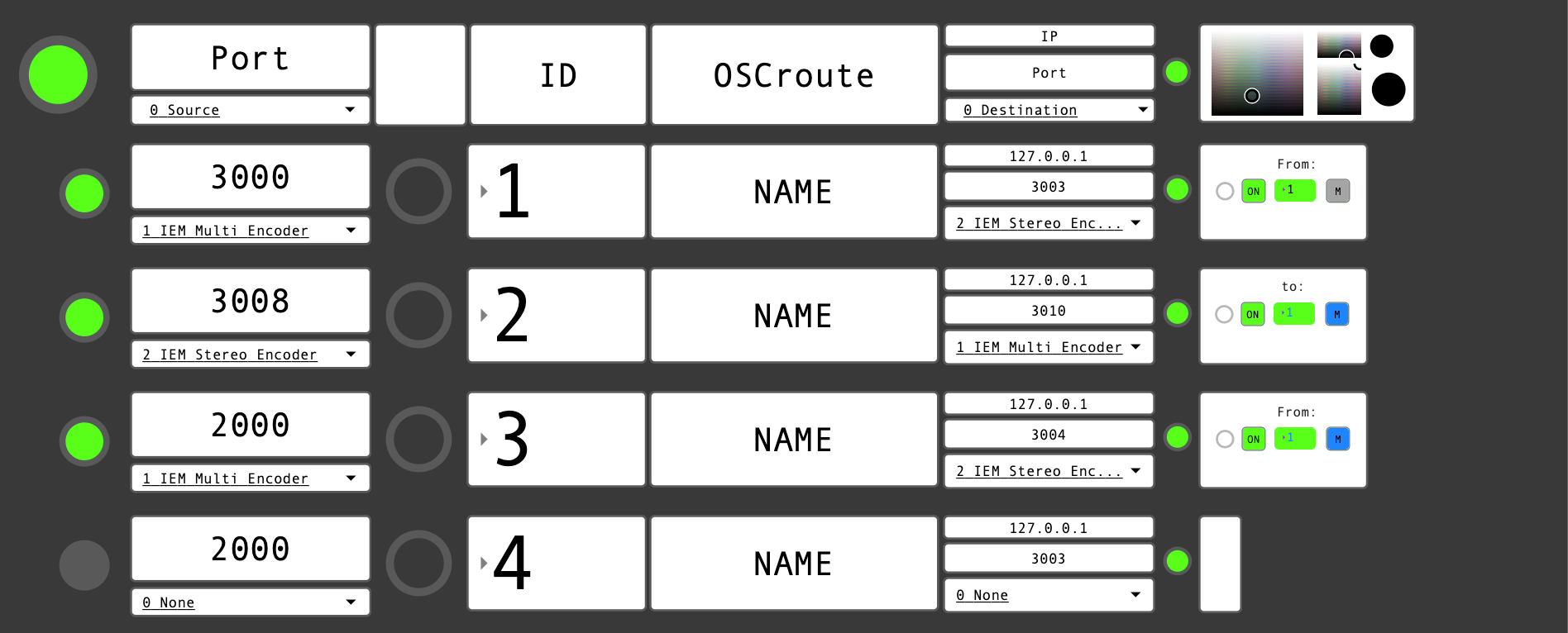

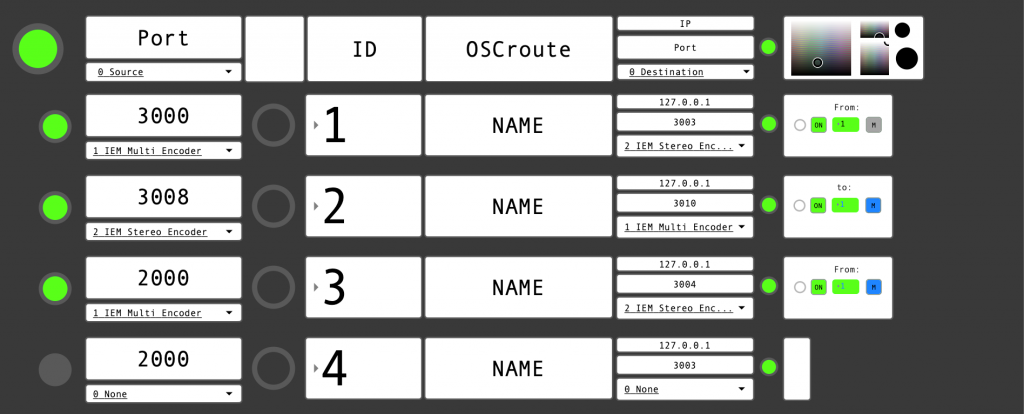

OSC Routing Matrix

Last year the Spatial Media Lab worked with simulacro.xyz to develop a software prototype of the OSC Routing Matrix, a software middleman for routing OSC bi-directionally between multiple machines. We started working on it because we have repeatedly found ourselves in situations where OSC is needed, but every new space and configuration requires its own custom setup. We wanted a standalone visual interface for communicating via OSC between multiple machines that is modular and easy to set up, and that lets templates be created and stored. Of course, as with everything we do at the Lab, we made it open source. Check it out here: https://github.com/Spatial-Media-Lab/OSC_Translator_Matrix

How this came about

The Spatial Media Lab was working with sound artist Zoë McPherson at one of her installations. Later she introduced Timo to Maximillo Fried and Javier Rojas, who were also working with her on her Cohabitation installation in the same city. At the other event, Maxi, Javier, and Studio DB worked together on the technical setup and installation of a 14.2 sound system and supported the artist in preparing the installation. After this, the Spatial Media Lab spent about two months with Javier and Maxi creating a working prototype of the OSC Routing Matrix for three of the IEM plugins: StereoEncoder, MultiEncoder, and EnergyVisualizer.

How it works

The software is based on wrappers. An input wrapper contains all of the required incoming data with its syntax already prepared, so once the IP address and port are set, data flows into the Matrix. Next, the Matrix translates this syntax into a custom standard that the Spatial Media Lab is in the process of creating, which is based on the recently introduced ADM-OSC standard created by SPAT. Finally, an output wrapper is chosen, which translates the data back into the syntax and value system required by the destination.
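To make the wrapper idea concrete, here is a minimal Python sketch of the three steps: input wrapper, common intermediate form, output wrapper. It is not the Matrix's actual code (the prototype lives in Max/MSP and JavaScript). The `CommonMessage` type, the function names, and the address patterns such as `/MultiEncoder/azimuth2` are assumptions made up for illustration, and the intermediate form shown here is not the Lab's ADM-OSC-based standard.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# An OSC message reduced to (address, single float argument) for this sketch.
OscMessage = Tuple[str, float]


@dataclass(frozen=True)
class CommonMessage:
    """Hypothetical intermediate form: one parameter of one sound source."""
    source: int   # 1-based source index
    param: str    # e.g. "azimuth" or "elevation"
    value: float


def multi_encoder_in(msg: OscMessage) -> Optional[CommonMessage]:
    """Input wrapper: parse an assumed address like /MultiEncoder/azimuth2.

    The trailing digits are treated as a 0-based source index (an assumption).
    """
    address, value = msg
    parts = address.strip("/").split("/")
    if len(parts) != 2 or parts[0] != "MultiEncoder":
        return None
    name = parts[1].rstrip("0123456789")
    index = parts[1][len(name):]
    if not name or not index:
        return None
    return CommonMessage(source=int(index) + 1, param=name, value=float(value))


def stereo_encoder_out(msg: CommonMessage) -> OscMessage:
    """Output wrapper: a single-source target, so the source index is dropped."""
    return (f"/StereoEncoder/{msg.param}", msg.value)


def route(
    msg: OscMessage,
    input_wrapper: Callable[[OscMessage], Optional[CommonMessage]],
    output_wrapper: Callable[[CommonMessage], OscMessage],
) -> Optional[OscMessage]:
    """Translate msg through the common form; None if the input is not recognised."""
    common = input_wrapper(msg)
    return None if common is None else output_wrapper(common)


if __name__ == "__main__":
    print(route(("/MultiEncoder/azimuth2", 45.0), multi_encoder_in, stereo_encoder_out))
```

Because every wrapper only has to speak the common form, adding a new tool in this sketch means writing one input and one output function rather than one translator per pair of tools.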

How it was made

Maxi started building the software in JavaScript, running through a web server. He has worked with JavaScript for many years, so he started there because there are several open-source libraries that help the development process. He began with an OSC JS library and set up a WebSocket in a browser to open the ports and listen for incoming OSC messages. He also started with the IEM plugins, and both sending and receiving were working. However, the software was ultimately not performant enough: there was latency somewhere along the path from the software to the web server to the client, and he is unsure where it was introduced. He also did not have enough free time at the end of the year, so this version was put on hold. Now, at the beginning of this year, he has more time and is picking it back up.

Javier started working in Max/MSP, as this is his primary coding environment. It took several weeks to solve the problem of packing the information together and wrapping it all into one thing. Once this was understood, we took off immediately. Javier needed odot from CNMAT (my alma mater) to understand how to pack the information together. It was also crucial to have a common language in between that simplifies the process and unifies all the data so that it can be transmitted between the two sides. The IEM MultiEncoder and StereoEncoder work bi-directionally, and the EnergyVisualizer simply routes data. All the sources are interchangeable when sending from the MultiEncoder to the StereoEncoder.

What’s Next?

Currently we’re focused on connecting audio and visuals together with the Matrix and have just finished a wrapper for Unreal, which has not yet been pushed. Next up is TouchDesigner. Please reach out if you’d like to help!

Links

https://www.simulacro.xyz/projects/osc-routing-matri

https://www.javier-rojas.xyz/projects

https://github.com/jvtrojas/OSC_Translator_Matrix